Problem: Given two processes i and j, write a program that guarantees mutual exclusion between the two without any additional hardware support.

Wastage of CPU clock cycles

In layman's terms, while a thread was waiting for its turn, it sat in a long while loop that tested the condition millions of times per second, doing unnecessary computation. There is a better way to wait, and it is known as 'yield'.

To understand what it does, we need to dig into how the process scheduler works in Linux. The idea described here is a simplified version of the scheduler; the actual implementation has many more complications.

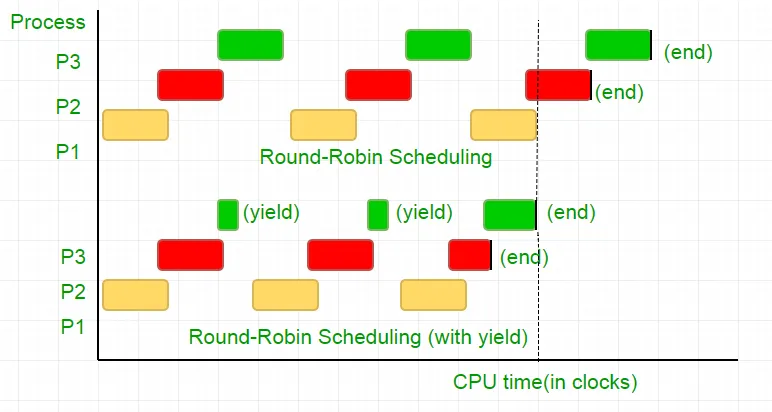

Consider the following example.

There are three processes: P1, P2 and P3. Process P3 contains a while loop similar to the one in our code; it does no useful computation and exits the loop only when P2 finishes its execution. The scheduler puts all three in a round-robin queue. Now say the processor's clock speed is 1000000 cycles/sec and it allocates 100 clock cycles to each process in each iteration. First P1 runs for 100 cycles (0.0001 seconds), then P2 (0.0001 seconds), followed by P3 (0.0001 seconds). Since there are no more processes, this cycle repeats until P2 ends, after which P3 leaves its loop, executes and eventually terminates.

Every slice that P3 spends spinning is a complete waste of 100 CPU clock cycles. To avoid this, we voluntarily give up the CPU time slice, i.e. yield, which ends the time slice early so that the scheduler picks the next process to run. Now we test our condition once and then give up the CPU. If the test takes 25 clock cycles, we save 75% of the computation in a time slice.

With a processor clock speed of only 1 MHz, this is a lot of saving!
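The arithmetic above can be checked with a small sketch. The helper names `saved_percent` and `slice_microseconds` are illustrative and not part of the original code; the figures (100 cycles per slice, 25 cycles per test, 1 MHz clock) are the ones assumed in the text.

```c
#include <assert.h>

/* Percentage of a time slice that is saved when the waiting process
 * tests its condition once and then yields instead of spinning.
 *   slice_cycles: cycles granted per slice (100 in the text)
 *   test_cycles:  cycles one condition test costs (25 in the text) */
static int saved_percent(int slice_cycles, int test_cycles)
{
    assert(slice_cycles > 0 && test_cycles >= 0 && test_cycles <= slice_cycles);
    return 100 * (slice_cycles - test_cycles) / slice_cycles;
}

/* Length of one time slice in microseconds at the given clock rate. */
static double slice_microseconds(int slice_cycles, double clock_hz)
{
    assert(clock_hz > 0.0);
    return 1e6 * slice_cycles / clock_hz;
}
```

With the numbers from the example, `saved_percent(100, 25)` gives 75 and `slice_microseconds(100, 1e6)` gives 100 microseconds, which is the 0.0001 seconds quoted above.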

Different platforms provide different functions to achieve this. Linux provides `sched_yield()`.

```c
void lock(int self)
{
    flag[self] = 1;
    turn = 1 - self;
    while (flag[1 - self] == 1 && turn == 1 - self)
        // Only change is the addition of
        // sched_yield() call
        sched_yield();
}
```

Memory fence

The code in the earlier tutorial might have worked on most systems, but it was not 100% correct. The logic was perfect, but most modern CPUs employ performance optimizations that can result in out-of-order execution. This reordering of memory operations (loads and stores) normally goes unnoticed within a single thread of execution, but it can cause unpredictable behaviour in concurrent programs.

Consider this example:

```
while (f == 0);
// Memory fence required here
print x;
```

In the example above, the compiler considers the two statements independent of each other and may reorder them to make the code more efficient, which can lead to problems in concurrent programs. To avoid this, we place a memory fence, which tells the compiler and the processor that memory operations must not be moved across the barrier.
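A minimal sketch of the `f`/`x` example in C, assuming GCC's `__sync_synchronize()` builtin and POSIX threads. The writer thread, the value 42 and the helper names `writer`, `wait_and_read` and `run_fence_demo` are assumptions added for illustration; the pseudocode `print x` is replaced by returning `x`. In new code, C11 `<stdatomic.h>` would be the more portable choice.

```c
#include <assert.h>
#include <pthread.h>
#include <sched.h>

static volatile int f = 0;  /* "ready" flag from the example     */
static volatile int x = 0;  /* value published before the flag   */

/* Writer: store the value, fence, then raise the flag. */
static void *writer(void *arg)
{
    (void)arg;
    x = 42;
    __sync_synchronize();   /* the store to x must not move below f = 1 */
    f = 1;
    return NULL;
}

/* Reader: wait for the flag, fence, then read the value. */
static int wait_and_read(void)
{
    while (f == 0)
        sched_yield();      /* give up the time slice while waiting */
    __sync_synchronize();   /* the load of x must not move above the loop */
    return x;
}

/* Hypothetical driver: starts the writer and returns what the reader saw. */
static int run_fence_demo(void)
{
    pthread_t t;
    f = 0;
    x = 0;
    int rc = pthread_create(&t, NULL, writer, NULL);
    assert(rc == 0);
    int seen = wait_and_read();
    rc = pthread_join(t, NULL);
    assert(rc == 0);
    return seen;
}
```

Because both sides place a fence between their two memory operations, a reader that has seen `f == 1` is expected to read the value the writer stored in `x`.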

So the order of the statements

```
flag[self] = 1;
turn = 1 - self;
while (turn condition check)
    yield();
```

has to be exactly as written for the lock to work; otherwise it ends up in a deadlock.

To ensure this, compilers provide an instruction that prevents statements from being reordered across the barrier. In the case of gcc, it is `__sync_synchronize()`.

With both changes applied, the full implementation becomes:

```cpp
// Filename: peterson_yieldlock_memoryfence.cpp
// Use below command to compile:
// g++ -pthread peterson_yieldlock_memoryfence.cpp -o peterson_yieldlock_memoryfence
#include
```

```c
// Filename: peterson_yieldlock_memoryfence.c
// Use below command to compile:
// gcc -pthread peterson_yieldlock_memoryfence.c -o peterson_yieldlock_memoryfence
#include
```
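A minimal sketch of how the lock, the yield call and the fence could fit together in C, assuming GCC's `__sync_synchronize()` and POSIX threads. It is not the original listing: `ITERATIONS`, `worker` and `run_counter_test` are illustrative names, the iteration count is deliberately smaller than the `MAX` used elsewhere in this article, and the fences after the wait loop and in `unlock` are an extra precaution added here.

```c
#include <assert.h>
#include <pthread.h>
#include <sched.h>

#define ITERATIONS 1000000L     /* illustrative; smaller than the article's MAX */

static volatile int flag[2];    /* flag[i] == 1: thread i wants to enter */
static volatile int turn;       /* whose turn it is to go first          */
static long ans;                /* shared counter protected by the lock  */

static void lock_init(void)
{
    flag[0] = flag[1] = 0;
    turn = 0;
}

static void lock(int self)
{
    flag[self] = 1;
    turn = 1 - self;            /* give the other thread priority */
    __sync_synchronize();       /* stores above must not pass the loads below */
    while (flag[1 - self] == 1 && turn == 1 - self)
        sched_yield();          /* give up the time slice instead of spinning */
    __sync_synchronize();       /* keep the critical section below the loop */
}

static void unlock(int self)
{
    __sync_synchronize();       /* keep the critical section above the release */
    flag[self] = 0;
}

static void *worker(void *arg)
{
    int self = *(int *)arg;
    lock(self);
    for (long i = 0; i < ITERATIONS; i++)   /* critical section */
        ans++;
    unlock(self);
    return NULL;
}

/* Hypothetical driver: runs two workers and returns the final count. */
static long run_counter_test(void)
{
    pthread_t t[2];
    int id[2] = {0, 1};
    lock_init();
    ans = 0;
    for (int i = 0; i < 2; i++) {
        int rc = pthread_create(&t[i], NULL, worker, &id[i]);
        assert(rc == 0);
    }
    for (int i = 0; i < 2; i++) {
        int rc = pthread_join(t[i], NULL);
        assert(rc == 0);
    }
    return ans;
}
```

If mutual exclusion holds, `run_counter_test()` returns `2 * ITERATIONS`, because each thread performs all of its increments while the other is kept out of the critical section.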

```java
import java.util.concurrent.atomic.AtomicInteger;

public class PetersonYieldLockMemoryFence {
    static AtomicInteger[] flag = new AtomicInteger[2];
    static AtomicInteger turn = new AtomicInteger();
    static final int MAX = 1000000000;
    static int ans = 0;

    static void lockInit() {
        flag[0] = new AtomicInteger();
        flag[1] = new AtomicInteger();
        flag[0].set(0);
        flag[1].set(0);
        turn.set(0);
    }

    static void lock(int self) {
        flag[self].set(1);
        turn.set(1 - self);
        // Memory fence to prevent the reordering of instructions beyond this barrier.
        // In Java, volatile variables provide this guarantee implicitly.
        // No direct equivalent to atomic_thread_fence is needed.
        while (flag[1 - self].get() == 1 && turn.get() == 1 - self)
            Thread.yield();
    }

    static void unlock(int self) {
        flag[self].set(0);
    }

    static void func(int s) {
        int i = 0;
        int self = s;
        System.out.println("Thread Entered: " + self);
        lock(self);

        // Critical section (Only one thread can enter here at a time)
        for (i = 0; i < MAX; i++)
            ans++;

        unlock(self);
    }

    public static void main(String[] args) {
        // Initialize the lock
        lockInit();

        // Create two threads (both run func)
        Thread t1 = new Thread(() -> func(0));
        Thread t2 = new Thread(() -> func(1));

        // Start the threads
        t1.start();
        t2.start();

        try {
            // Wait for the threads to end.
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            e.printStackTrace();
        }

        System.out.println("Actual Count: " + ans + " | Expected Count: " + MAX * 2);
    }
}
```

```python
import threading

flag = [0, 0]
turn = 0
MAX = 10**9
ans = 0


def lock_init():
    # This function initializes the lock by resetting the flags and turn.
    global flag, turn
    flag = [0, 0]
    turn = 0


def lock(self):
    # This function is executed before entering the critical section.
    # It sets the flag for the current thread and gives the turn to the other thread.
    global flag, turn
    flag[self] = 1
    turn = 1 - self
    while flag[1 - self] == 1 and turn == 1 - self:
        pass


def unlock(self):
    # This function is executed after leaving the critical section.
    # It resets the flag for the current thread.
    global flag
    flag[self] = 0


def func(s):
    # This function is executed by each thread. It locks the critical section,
    # increments the shared variable and then unlocks the critical section.
    global ans
    self = s
    print(f'Thread Entered: {self}')
    lock(self)
    for _ in range(MAX):
        ans += 1
    unlock(self)


def main():
    # This is the main function where the threads are created and started.
    lock_init()
    t1 = threading.Thread(target=func, args=(0,))
    t2 = threading.Thread(target=func, args=(1,))
    t1.start()
    t2.start()
    t1.join()
    t2.join()
    print(f'Actual Count: {ans} | Expected Count: {MAX*2}')


if __name__ == '__main__':
    main()
```

```javascript
class PetersonYieldLockMemoryFence {
    static flag = [0, 0];
    static turn = 0;
    static MAX = 1000000000;
    static ans = 0;

    // Function to acquire the lock
    static async lock(self) {
        PetersonYieldLockMemoryFence.flag[self] = 1;
        PetersonYieldLockMemoryFence.turn = 1 - self;

        // Asynchronous loop with a small delay to yield
        while (PetersonYieldLockMemoryFence.flag[1 - self] == 1 &&
               PetersonYieldLockMemoryFence.turn == 1 - self) {
            await new Promise(resolve => setTimeout(resolve, 0));
        }
    }

    // Function to release the lock
    static unlock(self) {
        PetersonYieldLockMemoryFence.flag[self] = 0;
    }

    // Function representing the critical section
    static func(s) {
        let i = 0;
        let self = s;
        console.log('Thread Entered: ' + self);

        // Lock the critical section
        PetersonYieldLockMemoryFence.lock(self).then(() => {
            // Critical section (Only one thread can enter here at a time)
            for (i = 0; i < PetersonYieldLockMemoryFence.MAX; i++) {
                PetersonYieldLockMemoryFence.ans++;
            }

            // Release the lock
            PetersonYieldLockMemoryFence.unlock(self);
        });
    }

    // Main function
    static main() {
        // Create two threads (both run func)
        const t1 = new Thread(() => PetersonYieldLockMemoryFence.func(0));
        const t2 = new Thread(() => PetersonYieldLockMemoryFence.func(1));

        // Start the threads
        t1.start();
        t2.start();

        // Wait for the threads to end.
        setTimeout(() => {
            console.log('Actual Count: ' + PetersonYieldLockMemoryFence.ans +
                        ' | Expected Count: ' + PetersonYieldLockMemoryFence.MAX * 2);
        }, 1000); // Delay for a while to ensure threads finish
    }
}

// Define a simple Thread class for simulation
class Thread {
    constructor(func) {
        this.func = func;
    }

    start() {
        this.func();
    }
}

// Run the main function
PetersonYieldLockMemoryFence.main();
```

```c
// mythread.h (A wrapper header file with assert statements)
#ifndef __MYTHREADS_h__
#define __MYTHREADS_h__
#include
```


```python
import threading
import ctypes


# Function to lock a thread lock
def Thread_lock(lock):
    lock.acquire()  # Acquire the lock
    # No need for assert in Python; acquire will raise an exception if it fails


# Function to unlock a thread lock
def Thread_unlock(lock):
    lock.release()  # Release the lock
    # No need for assert in Python; release will raise an exception if it fails


# Function to create a thread
def Thread_create(target, args=()):
    thread = threading.Thread(target=target, args=args)
    thread.start()  # Start the thread
    # No need for assert in Python; thread.start() will raise an exception if it fails
    return thread  # Returned so the caller can pass it to Thread_join


# Function to join a thread
def Thread_join(thread):
    thread.join()  # Wait for the thread to finish
    # No need for assert in Python; thread.join() will raise an exception if it fails
```

Output:

Thread Entered: 1

Thread Entered: 0

Actual Count: 2000000000 | Expected Count: 2000000000